What is Claude Opus 4.7?

Claude Opus 4.7, released on April 16, 2026, is Anthropic’s most capable generally available AI model to date. It delivers frontier-level performance across agentic coding, long-horizon reasoning, and high-resolution vision tasks — with a 1 million token context window, self-verification behavior, and a new xhigh effort level — all at the same $5/$25 per million token price as its predecessor.

If you’ve been watching the AI frontier race in 2026, you already know the battle between Anthropic, OpenAI, and Google has never been tighter. On April 16, 2026, Anthropic dropped Claude Opus 4.7 — and the release answers one question that developers, startup founders, and enterprise teams have been asking for months: what does it actually take to handle the hardest AI tasks reliably? We’ve gone deep into the benchmarks, the official documentation, early partner results, and head-to-head comparisons with GPT-5.4 and Gemini 3.1 Pro to give you a genuinely useful read on how to think about Opus 4.7 — and whether it’s the right model for your use case.

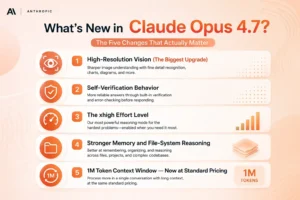

What’s New in Claude Opus 4.7? The Five Changes That Actually Matter

Claude Opus 4.7 is not a marginal iteration. It performs at the frontier across coding, agentic, and knowledge work capabilities. Rather than list every changelog item, we want to highlight the five improvements that will actually change how you work.

1. High-Resolution Vision (The Biggest Upgrade)

This is the headline feature most people are underreporting. Claude Opus 4.7 is Anthropic’s first Claude model with high-resolution image support: the maximum image resolution is now 2576px / 3.75MP, up from the previous limit of 1568px / 1.15MP.

In practice, this is more than a resolution bump. One early-access partner testing computer vision for autonomous penetration testing reported visual acuity jumping from 54.5% on Opus 4.6 to 98.5% on Opus 4.7 — a 44-percentage-point improvement. For teams working on UI automation, document extraction, or screenshot-driven agents, this changes what’s possible.
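To make the new ceiling concrete, here is a minimal sketch of a pre-upload check that scales an image down until it satisfies both numbers quoted above. We are assuming the 2576px figure applies to the longest edge and the 3.75MP figure to total pixel count; the function name and the way the limits combine are our own illustration, not a documented API behavior.

```python
import math

# Limits quoted in this article for Opus 4.7. How they are enforced
# (longest edge vs. total pixels) is an assumption of this sketch.
MAX_EDGE_PX = 2576
MAX_PIXELS = 3_750_000  # 3.75 MP


def fit_within_limits(width: int, height: int) -> tuple[int, int]:
    """Return (width, height) scaled down, if needed, to satisfy both limits."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    edge_scale = MAX_EDGE_PX / max(width, height)
    pixel_scale = math.sqrt(MAX_PIXELS / (width * height))
    scale = min(1.0, edge_scale, pixel_scale)
    # Floor so rounding can never push the result back over a limit.
    return max(1, math.floor(width * scale)), max(1, math.floor(height * scale))
```

A 5152x2576 screenshot is capped by the edge limit, while a 4000x4000 image is capped by the pixel budget, so it helps to check both before sending.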

2. Self-Verification Behavior

Opus 4.7 introduces a behavioral change that matters more than any benchmark number: the model now catches its own logical faults during the planning phase and checks its outputs before presenting them. For complex, multi-step coding tasks where a single error can cascade, this behavior change is more valuable than marginal benchmark points. We’ve seen this show up clearly in partner results: Cursor reported a jump from 58% to 70% on CursorBench, and Rakuten reported that Opus 4.7 resolves 3x more production tasks than Opus 4.6.

3. The xhigh Effort Level

Anthropic introduced a new effort level that sits between high and max. Previously, the jump from high to max was expensive and blunt — you either got full reasoning or you didn’t. The xhigh level fills this gap, giving developers finer control over the quality-speed-cost tradeoff. Claude Code now defaults to xhigh for all plans. For most demanding coding and agent workflows, we recommend starting here before reaching for max.
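One way to act on that recommendation is to start at xhigh and step up to max only when a result fails your own check. The sketch below is a hypothetical harness: `run_task` and `is_acceptable` are callbacks you would supply, and nothing here calls the Claude API or assumes how the effort level is passed to it.

```python
from typing import Callable

# Ordering taken from this article: xhigh sits between high and max.
EFFORT_LADDER = ["high", "xhigh", "max"]


def run_with_escalation(
    run_task: Callable[[str], str],
    is_acceptable: Callable[[str], bool],
    start: str = "xhigh",
) -> tuple[str, str]:
    """Try the task at `start`, stepping up one level each time the check fails.

    `run_task` and `is_acceptable` are hypothetical callbacks supplied by the
    caller; this sketch makes no API calls itself.
    Returns (effort_used, result) for the last attempt.
    """
    levels = EFFORT_LADDER[EFFORT_LADDER.index(start):]
    result = ""
    for effort in levels:
        result = run_task(effort)
        if is_acceptable(result):
            return effort, result
    # Every level failed the check; hand back the highest-effort attempt.
    return levels[-1], result
```

The design choice is simply to pay for max only on the requests that need it, instead of using it as a default.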

4. Stronger Memory and File-System Reasoning

Claude Opus 4.7 is better at writing and using file-system-based memory. If an agent maintains a scratchpad, notes file, or structured memory store across turns, that agent should get better at jotting down notes to itself and drawing on them in later tasks.

For long-running agents and multi-session workflows, this is a meaningful reliability upgrade that directly reduces the number of times your agent loses track of what it was doing.
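For readers who have not built this kind of memory before, here is a minimal sketch of a notes file an agent harness could expose as a tool. The `NotesFile` class, its method names, and the JSON Lines layout are all our own assumptions for illustration; Anthropic does not prescribe this format.

```python
import json
from pathlib import Path


class NotesFile:
    """Append-only JSON Lines scratchpad an agent can reread in later sessions."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def jot(self, topic: str, note: str) -> None:
        # One JSON object per line keeps appends cheap and the file greppable.
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps({"topic": topic, "note": note}) + "\n")

    def recall(self, topic: str) -> list[str]:
        # Return notes for a topic in the order they were written.
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            entries = [json.loads(line) for line in f if line.strip()]
        return [e["note"] for e in entries if e["topic"] == topic]
```

Because the file lives on disk, a later session can call `recall("build")` and pick up what an earlier session wrote with `jot("build", ...)`.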

5. 1M Token Context Window — Now at Standard Pricing

Claude Opus 4.7 provides a 1M context window at standard API pricing with no long-context premium.

This matters because competitors like Gemini 3.1 Pro tier up in price past certain context thresholds. With Opus 4.7, you can shove an entire codebase or document library into a single call without a surcharge surprise.

How Does Claude Opus 4.7 Perform on Real Benchmarks?

Numbers without context are just noise. Here’s what the benchmark data actually tells us — and where we need to be honest about Opus 4.7’s limitations.

Where Opus 4.7 Leads

On the benchmarks that matter most to developers and enterprise teams, Opus 4.7 is the current market leader. SWE-bench Verified jumps from 80.8% to 87.6%, a nearly 7-point gain that puts Opus 4.7 ahead of Gemini 3.1 Pro at 80.6%. On SWE-bench Pro, the harder multi-language variant, Opus 4.7 goes from 53.4% to 64.3%, leapfrogging both GPT-5.4 at 57.7% and Gemini at 54.2%.

On knowledge work — the category most relevant to enterprise professionals — the gap is even wider. Opus 4.7 currently leads the market on the GDPVal-AA knowledge work evaluation with an Elo score of 1753, surpassing both GPT-5.4 at 1674 and Gemini 3.1 Pro at 1314.

Tool use is best-in-class — Opus 4.7 leads MCP-Atlas at 77.3%, ahead of Opus 4.6 at 75.8%, GPT-5.4 at 68.1%, and Gemini 3.1 Pro at 73.9%.

For teams building tool-calling agents, this is the single most important benchmark to watch.

Where Opus 4.7 Falls Short

We won’t pretend this is a clean sweep. Agentic search is the one area that slipped — BrowseComp dropped from 83.7% to 79.3%, trailing Gemini 3.1 Pro at 85.9% and GPT-5.4 Pro at 89.3%.

If your agent workload is primarily research-heavy web browsing and synthesis, GPT-5.4 or Gemini 3.1 Pro are stronger options right now.

Terminal-based coding (Terminal-Bench) and multilingual Q&A are also areas where Opus 4.7 does not lead. The honest summary: if your work is coding-heavy, agent-heavy, or vision-heavy, Opus 4.7 is the current best. If it’s research-heavy or raw terminal scripting, it isn’t.
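If you want to keep these numbers next to your own evaluation results, a small table in code is enough. The sketch below only restates the scores quoted in this section (nothing was re-measured), and the helper names are ours.

```python
# Scores exactly as quoted in this article; none were re-measured here.
SCORES = {
    "SWE-bench Verified": {"Opus 4.7": 87.6, "Opus 4.6": 80.8, "Gemini 3.1 Pro": 80.6},
    "SWE-bench Pro": {"Opus 4.7": 64.3, "Opus 4.6": 53.4, "GPT-5.4": 57.7, "Gemini 3.1 Pro": 54.2},
    "GDPVal-AA (Elo)": {"Opus 4.7": 1753, "GPT-5.4": 1674, "Gemini 3.1 Pro": 1314},
    "MCP-Atlas": {"Opus 4.7": 77.3, "Opus 4.6": 75.8, "GPT-5.4": 68.1, "Gemini 3.1 Pro": 73.9},
    "BrowseComp": {"Opus 4.7": 79.3, "Opus 4.6": 83.7, "Gemini 3.1 Pro": 85.9, "GPT-5.4 Pro": 89.3},
}


def leaders(scores: dict[str, dict[str, float]]) -> dict[str, str]:
    """Map each benchmark to the model with the highest quoted score."""
    return {bench: max(models, key=models.get) for bench, models in scores.items()}


def margin_over_runner_up(models: dict[str, float]) -> float:
    """Gap between the best and second-best quoted score, to one decimal."""
    top, second = sorted(models.values(), reverse=True)[:2]
    return round(top - second, 1)
```

Looking at margins as well as leaders is useful here: the MCP-Atlas lead over the runner-up is 1.5 points, while the SWE-bench Pro lead is 6.6.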

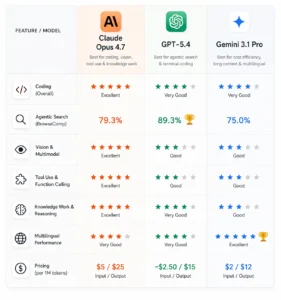

How Does Claude Opus 4.7 Compare to GPT-5.4 and Gemini 3.1 Pro?

This is the comparison every developer team is running right now. Here’s our direct breakdown.

Claude Opus 4.7 vs GPT-5.4

The race is tight: on directly comparable benchmarks, Opus 4.7 leads GPT-5.4 by only seven wins to four.

GPT-5.4 wins on agentic search (BrowseComp: 89.3% vs 79.3%) and raw terminal coding. Opus 4.7 wins clearly on coding, vision, tool use, and knowledge work. Pricing: Opus 4.7 at $5/$25 per million tokens vs GPT-5.4 at roughly $2.50/$15. That makes Opus 4.7 approximately 2x the cost on input and about 1.7x on output, and for most coding workflows, that premium is worth it.

Claude Opus 4.7 vs Gemini 3.1 Pro

At $2 per million input tokens and $12 per million output tokens, Gemini 3.1 Pro is the cheapest of the three. Claude Opus 4.7 at $5/$25 costs 2.5x as much per input token and roughly 2.1x as much per output token.

Gemini wins on price and multilingual tasks. Opus 4.7 wins on coding reliability, vision, and tool use, and it carries no long-context surcharge. We’d reach for Gemini 3.1 Pro when bulk processing and cost sensitivity dominate the decision, and Opus 4.7 when quality on each individual task is what matters.
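The rate cards are easier to compare on a concrete request. This is a minimal sketch using only the per-million-token prices quoted in this article; it assumes flat rates and ignores any long-context tiers, caching, or batch discounts. The GPT-5.4 figures are the approximate ones given above.

```python
# USD per million tokens as (input, output), as quoted in this article.
# The GPT-5.4 figures are described there as approximate.
PRICES = {
    "Claude Opus 4.7": (5.00, 25.00),
    "GPT-5.4": (2.50, 15.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Flat-rate cost in USD for one request (no tiers or discounts applied)."""
    in_rate, out_rate = PRICES[model]
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


if __name__ == "__main__":
    for name in PRICES:
        print(f"{name}: ${request_cost(name, 200_000, 4_000):.3f}")
```

For a 200,000-token prompt with a 4,000-token reply, that works out to about $1.10 on Opus 4.7, $0.56 on GPT-5.4, and $0.45 on Gemini 3.1 Pro under these assumptions.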

What Does This Mean for Your Stack?

The most practical insight from this comparison: no single model wins everything in April 2026. The mature approach is routing — use Opus 4.7 for complex coding and vision work, Sonnet 4.6 for mid-tier tasks, and Gemini or Haiku for bulk processing. This kind of routing typically cuts monthly API spend by 50–65% versus running everything through a single flagship.
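In its simplest form, routing is just a lookup from task type to model. The sketch below is illustrative only: the task categories, the fallback choice, and the `route` helper are our assumptions, and the strings are the model names used in this article, not API model identifiers.

```python
# Labels mirror the model names used in this article; they are not API model IDs.
ROUTES = {
    "complex_coding": "Opus 4.7",
    "vision": "Opus 4.7",
    "mid_tier": "Sonnet 4.6",
    "bulk": "Haiku",  # the article suggests Gemini or Haiku for bulk work
}

DEFAULT_MODEL = "Sonnet 4.6"  # assumption: unclassified work goes to the mid tier


def route(task_kind: str) -> str:
    """Pick a model label for a task kind, falling back to the mid tier."""
    return ROUTES.get(task_kind, DEFAULT_MODEL)
```

A real router would classify tasks before the lookup, but keeping the mapping in one table makes it easy to change when benchmarks or prices move.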

What Is the Real Pricing Story for Claude Opus 4.7?

How Does Claude Opus 4.7 Pricing Actually Work?

The official answer is simple: Opus 4.7 costs $5 per million input tokens and $25 per million output tokens — unchanged from Opus 4.6. But there’s a catch worth understanding before you migrate any production workload.

Opus 4.7 ships with a new tokenizer that can produce up to 35% more tokens for the same input text. Your real bill per request can go up even though the rate card did not.

This is not a reason to avoid upgrading — but it is a reason to benchmark your actual production prompts before committing to a full switch. The quality improvement on complex coding tasks will likely outweigh the token inflation for most teams. For high-volume bulk processing, it won’t.
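Here is the arithmetic behind that warning, as a rough sketch. It uses the article's rates and the "up to 35%" figure as an upper bound, and it assumes the inflation applies equally to input and output tokens, which may not hold for your prompts. Measure real token counts before relying on it.

```python
INPUT_RATE = 5.00     # USD per million input tokens (from this article)
OUTPUT_RATE = 25.00   # USD per million output tokens (from this article)
MAX_INFLATION = 0.35  # "up to 35% more tokens": an upper bound, not a typical value


def cost_usd(input_tokens: float, output_tokens: float) -> float:
    """Cost of one request at the unchanged rate card."""
    return (input_tokens * INPUT_RATE + output_tokens * OUTPUT_RATE) / 1_000_000


def worst_case_cost_usd(
    old_input_tokens: int, old_output_tokens: int, inflation: float = MAX_INFLATION
) -> float:
    """Cost if both token counts grow by `inflation` under the new tokenizer."""
    factor = 1.0 + inflation
    return cost_usd(old_input_tokens * factor, old_output_tokens * factor)
```

A request that used 100,000 input and 10,000 output tokens costs $0.75 at these rates; in the worst case the same text would cost about $1.01.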

Cost reduction options are meaningful: up to 90% savings with prompt caching and 50% savings with batch processing. For nightly summarization runs, backfills, or evaluation sweeps, routing through the Batch API makes Opus 4.7 economically viable even at scale.
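To see what those discounts could mean, here is a simplified sketch. It assumes the 90% figure applies to the cached share of input tokens and the 50% figure applies to a whole batch request, and it ignores any cost of writing to the cache and any interaction between the two discounts. Check the official pricing page for the actual rules.

```python
INPUT_RATE = 5.00      # USD per million input tokens (from this article)
CACHE_DISCOUNT = 0.90  # "up to 90%": treated here as the best case
BATCH_DISCOUNT = 0.50  # 50% off for batch processing


def input_cost_with_cache(
    input_tokens: int, cached_fraction: float, discount: float = CACHE_DISCOUNT
) -> float:
    """Input cost in USD when `cached_fraction` of the prompt is read from cache."""
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    return (fresh * INPUT_RATE + cached * INPUT_RATE * (1.0 - discount)) / 1_000_000


def batch_cost(standard_cost_usd: float, discount: float = BATCH_DISCOUNT) -> float:
    """Cost of the same work sent through batch processing."""
    return standard_cost_usd * (1.0 - discount)
```

With 80% of a 1M-token prompt served from cache, input cost drops from $5.00 to about $1.40 under these assumptions.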

Who Should Actually Use Claude Opus 4.7?

Is Claude Opus 4.7 Right for You?

Claude Opus 4.7 is worth reaching for when the quality of each individual task directly affects your output — production-ready code, complex multi-agent orchestration, long-form document creation, or vision-heavy workflows. It is probably not the right default for high-volume, cost-sensitive tasks where Sonnet 4.6 or Gemini 3.1 Pro produce equivalent results at a fraction of the price.

Here’s how we’d break it down by role:

Software developers and engineering teams — Opus 4.7 is the strongest choice if your primary work is resolving real GitHub issues, working across multi-language codebases, or building agentic coding pipelines with Claude Code. The 70% CursorBench score and 87.6% SWE-bench Verified result speak directly to this use case.

Enterprise knowledge workers — The GDPVal-AA lead (Elo 1753 vs GPT-5.4’s 1674) makes Opus 4.7 the best available model for document drafting, financial analysis, redlining, and complex document reasoning. The .docx and .pptx editing improvements are particularly relevant.

AI founders and product teams — If you’re building autonomous agents that need to maintain state across long sessions, the improved memory behavior and xhigh effort level make Opus 4.7 meaningfully more reliable than Opus 4.6. Teams at Notion, Hebbia, and Bolt all reported double-digit reliability improvements in their agent workflows.

Researchers and analysts — GPT-5.4 currently leads on agentic web research. If your primary use case is large-scale information gathering and synthesis across many web sources, we’d recommend testing both before committing.

The Elephant in the Room: Claude Mythos

No honest review of Opus 4.7 can skip this. Anthropic publicly acknowledges that they have a more capable model they haven’t released. Claude Mythos Preview scores 77.8% on SWE-bench Pro versus Opus 4.7’s 64.3%, and on most benchmarks, it outperforms everything in this comparison.

Anthropic is holding Mythos back due to safety concerns around cybersecurity capabilities. Specifically, the model can find and exploit software vulnerabilities at a level rivaling skilled security researchers.

For most teams, this changes nothing practically today. Opus 4.7 is the most capable publicly available model Anthropic offers, and it’s available now across claude.ai, the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. But it’s worth tracking — when Mythos does get a broader release, the entire frontier comparison shifts meaningfully.

Frequently Asked Questions

What is Claude Opus 4.7 and when was it released?

Claude Opus 4.7 is Anthropic’s most capable generally available AI model, released on April 16, 2026. It delivers frontier-level performance on agentic coding, knowledge work, and vision tasks, with a 1M token context window and self-verification behavior that catches errors before output.

How does Claude Opus 4.7 compare to GPT-5.4?

Opus 4.7 leads on coding (SWE-bench Pro: 64.3% vs 57.7%), vision, tool use, and knowledge work. GPT-5.4 leads on agentic web research and terminal-based coding. Opus 4.7 costs roughly 2x as much per input token. For engineering-adjacent work, Opus 4.7 is the stronger default in April 2026.

How much does Claude Opus 4.7 cost?

Opus 4.7 is priced at $5 per million input tokens and $25 per million output tokens — unchanged from Opus 4.6. However, a new tokenizer can increase token counts by up to 35% for the same text, meaning effective costs per request may be higher. Prompt caching and batch processing offer up to 90% and 50% discounts respectively.

Should I upgrade from Claude Opus 4.6 to 4.7?

Yes, if your work involves coding, vision tasks, or long-horizon agent workflows. If your production prompts are already tuned and your workload is not vision-heavy, it’s worth benchmarking before a full migration, given the new tokenizer’s potential cost impact.

What is the context window of Claude Opus 4.7?

Claude Opus 4.7 supports a 1 million token context window at standard API pricing — with no long-context price premium, unlike some competitor models.

What is the xhigh effort level in Claude Opus 4.7?

xhigh is a new reasoning effort setting that sits between the existing high and max levels. It gives developers finer control over the quality-speed-cost tradeoff, and Claude Code defaults to xhigh for all plans. For most demanding coding tasks, xhigh is the recommended starting point.

Who is Claude Opus 4.7 best suited for?

Opus 4.7 is best for software engineers working on production codebases, enterprise teams handling complex document creation, and AI founders building autonomous agents. For high-volume, cost-sensitive tasks, Sonnet 4.6 or Gemini 3.1 Pro offer better price-performance.